Antifragility and the year of the cut

Hackers expose your secrets, but they also make the flow of information thrive. The way to make your organization stronger is to utilize hackers rather than try to stop them, because embracing randomness, chaos and uncertainty is the only chance of survival in these uncertain times. This is the conclusion I draw from the latest work on antifragility by one of the most influential thinkers of the last century.

By Eh'den (Uri) Biber CISM/CISA/CISSP/CRISC, member of the NeuroLeadership institute.

On April 28, 1987, the death of one American made front-page headlines all around the world. He was not a politician, nor rich, nor famous before he died; he was just a mechanical engineer. What made his death so famous was the fact that he was killed in Nicaragua by anti-government Contra rebels who were supported by the US government, while he was working on a small hydroelectric dam project in the northern part of the country. The event brought to light the policy of the Ronald Reagan administration at that time, one that supported anti-left movements and regimes around the world regardless of their ethical standing.

One of the people who were touched by the story decided to write about it, and so the story of that person, Ben Linder, was forever engraved into our memory via the beautiful words of the song “Fragile”, released by Sting on his album “...Nothing Like the Sun”.

[youtube=http://www.youtube.com/watch?v=fJJQ2O4qBAM]

On and on the rain will fall

Like tears from a star, like tears from a star

On and on the rain will say

How fragile we are, how fragile we are

[Sting, “fragile”]

The Sting album was released on Tuesday, October 13th, 1987. Less than a week later, on Monday the 19th of October, stock markets all around the world crashed. On what became known as “Black Monday”, the world's financial markets went down without any warning. By the end of October, stock markets in Hong Kong had fallen 45.5%, Australia 41.8%, Spain 31%, the United Kingdom 26.45%, the United States 22.68%, and Canada 22.5%. New Zealand's market was hit especially hard, falling about 60% from its 1987 peak and taking several years to recover (info: Wikipedia).

Yet the day the markets crashed was also the day a young investment trader became financially free. Twenty years later that person published a book that became a bestseller, one that the Sunday Times called “one of the twelve most influential books since World War 2”. The book's title became an expression that is now part of human consciousness; the man is Nassim Nicholas Taleb, and the book he wrote is “The Black Swan”.

The Black Swan

For those who haven’t read Taleb’s book (seriously?), here is an explanation of the title: for many centuries most of humanity was sure that black swans did not exist. That belief was captured in the Latin phrase "rara avis in terris nigroque simillima cygno", or “a rare bird in the lands, and very like a black swan." It was only in 1697 that a Dutch expedition discovered black swans in Western Australia, which turned the term “black swan” into a description of something that seems impossible but is later proven to be true.

According to Taleb (taken from Wikipedia), black swan theory addresses:

1) The disproportionate role of high-impact, hard-to-predict, and rare events that are beyond the realm of normal expectations in history, science, finance and technology

2) The non-computability of the probability of the consequential rare events using scientific methods (owing to the very nature of small probabilities)

3) The psychological biases that make people individually and collectively blind to uncertainty and unaware of the massive role of the rare event in historical affairs

Taleb said that almost all major scientific discoveries, historical events, and artistic accomplishments were “black swans”, meaning they were all unpredicted and undirected. In his book he argued that World War I, September 11, the personal computer revolution and the Internet were all black swan events, and since the book was published a year before the financial crash of 2008 it turned Taleb into some sort of modern prophet. NASA invited him to talk about how to identify risks in human missions to the moon and beyond, he has given talks about risk models for the US Department of Defense, and in Britain his work is regarded so highly by the current government that it is considered a must-read. Professor Taleb’s work was one of the main reasons British Prime Minister Cameron rejected the European Commission’s plan for central state control and planning.

Fragility, antifragility and robustness

For all those born beneath an angry star

Lest we forget how fragile we are

[Sting, “fragile”]

On December 1st, 2011, in front of a room packed with people, Taleb came to speak about the subject of his upcoming book at the Royal Society for the encouragement of Arts, Manufactures and Commerce (AKA the RSA). The name of his talk was “The Predictability of Unpredictability”. (Taleb’s first book was called “Fooled by Randomness”.)

Taleb’s new work focuses on what he calls antifragility, which is the opposite of fragility. The RSA talk was actually a very short talk by Taleb followed by a longer Q&A session, so from here on everything below is based on all the material Taleb has written on the subject in the last few years. I’ve consolidated it as much as I can, and incorporated my own ideas on the subject of antifragility. Enjoy my hack :)

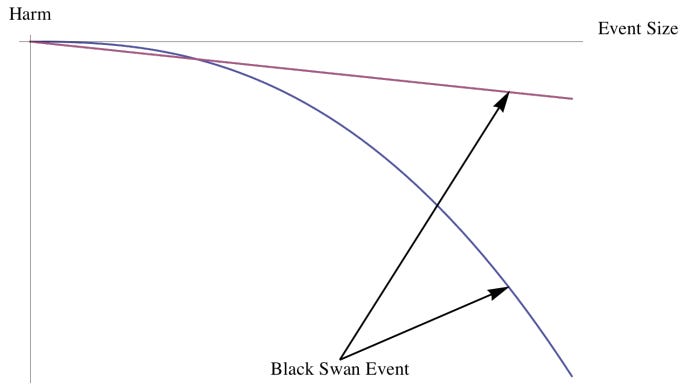

So first, let us describe what is fragile. Something fragile is both unbroken and subject to nonlinear effects, and extreme, rare events of large size (or high speed) are rarer than ones of small size (and slow speed). Take earthquakes, for example: every year we have about 3 million earthquakes that are below 2 on the Richter scale, and they do little harm. If we get an earthquake larger than 6 we hear about it everywhere, because the consequences are horrific. For the fragile, the cumulative effect of small shocks is smaller than the single effect of a large shock. Remember: the fragile is hurt a lot more by extreme events. In IT security, for example, a fragile system could be your firewall logging, which is supposed to document predefined events based on various criteria. As long as you don’t experience a DDoS you’re OK (for my non-IT-security readers, a Distributed Denial of Service is an attack in which a huge number of computers across the internet try to connect to a website at the same time, leaving it unable to respond). However, a DDoS renders your logging system useless: you will run out of disk space for all the events, so the system will either crash or overwrite previous log entries.
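To make the “many small shocks versus one large shock” point concrete, here is a minimal Python sketch. It assumes a hypothetical damage function in which harm grows with the square of the shock size; the function names, the exponent and the shock sizes are my own illustrative assumptions, not something Taleb specifies.

```python
def harm(shock_size: float, exponent: float = 2.0) -> float:
    """Hypothetical damage function for a fragile system.

    Assumption: harm grows faster than the shock itself (exponent > 1),
    which is one simple way to model a nonlinear, fragile response.
    """
    return shock_size ** exponent


def cumulative_harm(shock_sizes: list[float]) -> float:
    """Total harm from a sequence of independent shocks."""
    return sum(harm(size) for size in shock_sizes)


# One hundred small shocks of size 1 versus a single shock of size 100.
# Both scenarios deliver the same total "amount" of shock (100 units).
many_small = cumulative_harm([1.0] * 100)
single_large = cumulative_harm([100.0])

print(f"100 small shocks: total harm = {many_small}")
print(f"1 large shock:    total harm = {single_large}")
print(f"The large shock hurts {single_large / many_small:.0f}x more")
```

With this assumed damage function, the same total amount of shock does a hundred times more harm when it arrives in one blow, which is the nonlinearity that defines the fragile.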

Now let us describe the antifragile. For the antifragile, shocks bring more benefits (and, equivalently, less harm) as their intensity increases, up to a point. Going to the gym puts stress on your body, but it makes your muscles grow. Learning a new language “stresses” your mental capability, but by doing so it improves your brain (again, up to a point). In the IT security world, an anti-virus client that detects strange behavior on a machine and reports it back to a central location uses that information to make other nodes (computers) better protected. The antifragility motto could be described as “What doesn’t kill me makes me stronger”.

Now what about robustness? Isn’t a robust system antifragile? Not exactly, explains Taleb. Robust systems are shock resistant, but they gain nothing from shocks. A robust system does not change after a shock; it simply keeps its previous state. Robust systems experience small or no variations through time, but they do not grow. For a system to be robust, all risks must be visible and out in the open.

So the main difference between robust and antifragile is that the robust does not and cannot gain from unexpected events, while antifragile systems thrive on them. If we use Greek mythology as a source of examples, the sword that hung above Damocles by a single hair from a horse’s tail (AKA the sword of Damocles) describes fragility. A phoenix will forever remain a phoenix; it will never evolve or become stronger after it is reborn from its ashes. But when Hercules, in his second labor, set out to kill the Hydra, he discovered that cutting off one of her heads made two pop out in its place. The phoenix is robust; the Hydra is antifragile.

Antifragility is important because it is behind anything that has changed with time: evolution, culture, ideas, riots and revolutions, political systems, technological innovation, etc. After every unexpected event (AKA black swan) there were systems that gained from the shock, and others that collapsed. Those that collapsed were fragile; those that prevailed were antifragile. The counter side of antifragility is fragility, because the fragile doesn’t like randomness, uncertainty, errors, etc.

If I understand Taleb correctly, robustness is the straight line, while the curves are everything else...

The effect of the speed & optimization

According to Taleb, the problem is that the more optimized your systems become, the faster you move, and when you move that fast your crash is so horrible that the cost of recovery becomes huge. The bigger, more “optimized” and “redundancy free” you try to become, the more fragile you become, and the bigger the fall you will experience when it comes; Taleb strongly insists on the word “when”, not “if”.

If you are driving a car and are about to hit a wall, there is a major difference between hitting it at 100 miles per hour and hitting it at 0.1 miles per hour: at 100 miles per hour you would probably not survive, while at 0.1 miles per hour you will most likely be OK. The same goes for jumping down 100 meters: if you do it in one go you will break every bone in your body, but if you do it in one million small steps you will not feel it. Speed has a huge effect on the impact of black swans.
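The car example can be put into rough numbers with the standard kinetic energy formula, E = ½·m·v². The Python sketch below assumes a hypothetical 1,500 kg car; the mass is purely an illustrative assumption and cancels out of the ratio, and the helper name is mine.

```python
MPH_TO_METERS_PER_SECOND = 0.44704  # exact conversion factor

# Assumption for illustration only: a mid-size car of about 1,500 kg.
CAR_MASS_KG = 1500.0


def kinetic_energy_joules(mass_kg: float, speed_mph: float) -> float:
    """Kinetic energy E = 1/2 * m * v^2, with the speed given in mph."""
    speed_mps = speed_mph * MPH_TO_METERS_PER_SECOND
    return 0.5 * mass_kg * speed_mps ** 2


fast_crash = kinetic_energy_joules(CAR_MASS_KG, 100.0)
slow_crash = kinetic_energy_joules(CAR_MASS_KG, 0.1)
energy_ratio = fast_crash / slow_crash

print(f"Energy at 100 mph: {fast_crash:,.0f} J")
print(f"Energy at 0.1 mph: {slow_crash:.3f} J")
print(f"Ratio: about {energy_ratio:,.0f} to 1")
```

Because energy scales with the square of the speed, a 1,000-fold difference in speed becomes roughly a million-fold difference in the energy the wall (and the driver) has to absorb. The harm is not proportional to the speed; it is nonlinear.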

Crash Test Dummies

[youtube=http://www.youtube.com/watch?v=vIbcqgXh5-4]

Because fragile systems fear randomness so much, they tend to be designed top-down, with a lot of thought invested in them; but since there are always invisible and unpredictable events, those systems end up exposed to the harm of black swans. When an unplanned negative event occurs, its cost cancels out all the benefit the design was supposed to provide by trying to prevent it.

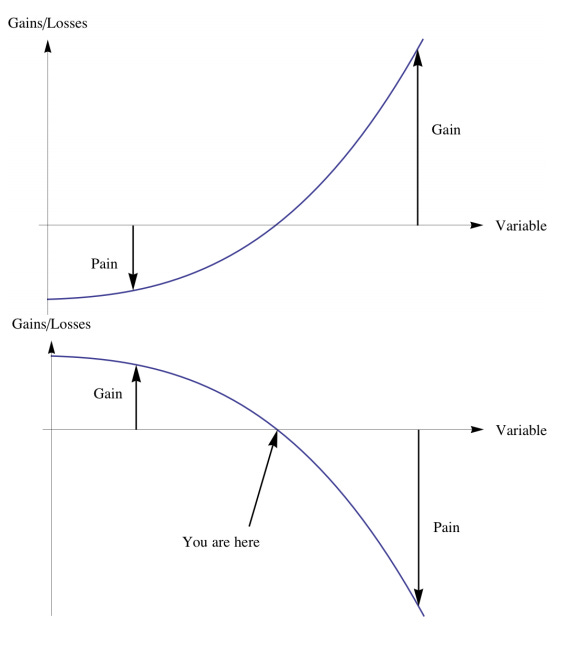

Before I continue, here is a simple way to describe a very important aspect of risk: convex and concave. The convex (the face on the left) is antifragile, while the concave (the face on the right) is fragile (it has negative convexity effects).

To understand the difference between a black swan’s impact on the convex and on the concave (negative convex), see the Gain vs. Pain example below: the convex, antifragile curve is the upper one, and the concave (negative convex), fragile curve is the lower one.

Fragile systems are prone to negative asymmetries, negative convexity effects. Take projects or flights, for example: both are extremely complex and [theoretically] optimized processes, and almost any unplanned negative event ends up causing horrible delays. Increasing uncertainty in the system amplifies mostly (sometimes only) negative outcomes. The antifragile, however, is prone to positive asymmetries, positive convexity effects. Increasing randomness and uncertainty in the system raises the probability of very favorable outcomes, and accordingly expands the expected payoff. Let me give you an example of antifragility: hackers usually don’t really care about the reward they are offered, they do it for the sake of doing it, and negative “counter attacks” actually make them even more upset.
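The asymmetry described above can be sketched numerically. The Python example below is a toy model under my own assumptions: zero-mean random shocks, a convex payoff of x² standing in for the antifragile, and a concave payoff of -x² standing in for the fragile. None of these functions come from Taleb; they are simply the smallest convex and concave shapes that show the effect.

```python
import random
from statistics import mean


def convex_payoff(shock: float) -> float:
    """Toy antifragile response: curves upward, so big shocks pay off more."""
    return shock * shock


def concave_payoff(shock: float) -> float:
    """Toy fragile response: curves downward, so big shocks cost more."""
    return -(shock * shock)


def average_payoffs(volatility: float, base_shocks: list[float]) -> tuple[float, float]:
    """Scale the same zero-mean shocks by `volatility` and average each payoff."""
    shocks = [volatility * z for z in base_shocks]
    convex_avg = mean([convex_payoff(s) for s in shocks])
    concave_avg = mean([concave_payoff(s) for s in shocks])
    return convex_avg, concave_avg


# Fixed seed so the run is repeatable; the shocks average out to roughly zero.
rng = random.Random(42)
base_shocks = [rng.gauss(0.0, 1.0) for _ in range(10_000)]

results = {vol: average_payoffs(vol, base_shocks) for vol in (0.5, 1.0, 2.0)}
for vol, (convex_avg, concave_avg) in results.items():
    print(f"volatility {vol}: convex avg {convex_avg:+.2f}, concave avg {concave_avg:+.2f}")
```

The shocks themselves average out to about zero at every volatility level, yet the convex payoff's average rises as volatility increases while the concave payoff's average falls. More randomness helps one shape and hurts the other, which is the gain versus pain picture in numbers.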

After 20 years of working in IT, I find Professor Taleb’s argument to be extremely accurate. I was fortunate to work in some of the most advanced redundant IT environments, some of the most secure IT environments, and some of the most regulated IT environments out there, all of which failed at some point. Sometimes it was a human mistake, sometimes a hardware failure, sometimes a software problem, and sometimes a failed security mechanism. Sometimes we didn’t even know why our systems failed. At the end of the day, what matters most is the impact, and the impact of serious failures in those extremely optimized systems was always huge.

Give me some skin

[youtube=http://www.youtube.com/watch?v=1SLrC206-sM]

If you wonder why failure occurs in fragile systems, Taleb has an answer. In a recent interview he was as direct as always: "The fragility of the whole system comes from two elements: the ability to hide the risks, and the fact that people have no 'skin in the game’ ".

Anything organic benefits from and needs randomness; it makes it grow. The problem is that as a system grows, it starts to become inorganic. The bigger the system is, the greater the chance that some people will pay no direct price for whatever their decisions or participation bring, or as Taleb calls them, “people with no skin in the game”. People with “no skin in the game” keep the results of successes, pass the failures down to others and assign their own risks to others. Then there are the people who “have skin in the game”, who keep their own failures and take their own risks. And finally, there are those who have their soul in the game: the people who carry others’ downside on their shoulders, and give their upside to others.

Here is a table that Taleb provided on the subject, with one small addendum I’ve added :)

| NO SKIN IN THE GAME | SKIN IN THE GAME | SKIN IN THE GAME FOR THE SAKE OF OTHERS, OR SOUL IN THE GAME |
| --- | --- | --- |
| (Keeps upside, transfers downside to others, long a hidden option at someone else’s expense) | (Keeps his own downside, takes his own risk) | (Takes the downside on behalf of others, or universal values) |
| Bureaucrats | Citizens | Saints, Knights, Warriors, Soldiers |
| Cheap talk (“tawk” in Fat Tony’s lingo) | Actions, no tawk | Expensive talk |
| Consultants, sophists | Merchants, Businessmen | Prophets, Philosophers (in the pre-modern sense) |
| Businesses | Artisans | Artists |
| Corporate Executives (with suit) | Entrepreneurs | Innovators |
| Theoreticians, data miners, observational studies | Laboratory and field experimenters | Mavericks |
| Centralized government | Government of city states | Municipal government |
| Editors | Writers | Great writers |
| Journalists | Speculators | Those journalists who expose frauds (powerful regimes, corporations); Rebels |
| Politicians | Activists | Dissidents, Revolutionaries |
| Bankers | Traders (own funds) | (They would not engage in vulgar commerce) |
| Fragilista Prof. Dr. Bernanke,... (most academics and other halfmen) | Fat Tony | Nero Tulip |
| Risk Vendors | | Taxpayers (not quite voluntarily soul in the game, but they are victims) |
| IT Security personnel | | Hackers |

Intellectual nightmare

People look at systems and love to imagine that optimizing them will make them better (this is true for IT, financial, governmental, and organizational systems). If you dare to disturb their utopian dream that optimization means a better future you will be hammered (AKA “shoot the messenger” syndrome). Surprisingly, and contrary to common belief, intelligence doesn’t really help here: Taleb argues that the more intellectual you are, the more you fall in love with the theory your brain imposes on reality (AKA perception), and that is dangerous because it blinds you to the uncertainty of reality. In his lecture he called it “denial of antifragility”, but Lao Tzu already told us about it six centuries before the birth of Christ in his “Tao Te Ching”:

The Way that can be told of is not an unvarying way;

The names that can be named are not unvarying names.

It was from the Nameless that Heaven and Earth sprang;

The named is but the mother that rears the ten thousand creatures, each after its kind.

In my career I have been involved in an endless number of projects: I was a project leader, technical lead, member of the design team, member of the implementation team, or just a person who was kept informed of the project. In all those projects I did what I have done ever since I was a child: I challenged “the system” when it came to its assumptions, especially with regard to risks. I can sadly say that if you think only school teachers dislike the kid who asks too many questions, wait till you meet some managers who try to protect their baby, AKA the IT system they are in charge of. I remember a conversation with an extremely bright manager who was supposed to review a system design I had made. As I was explaining the controls I had implemented for every possible problem I could think of, he looked at me puzzled and said “Uri, I’m not going to approve it, this is too much, and we never had the problems you talk about”. My design was rejected in favor of a more “standard, simplified design” that had no controls to cover those problems, and in the end the project finished a year later than expected because many of the problems I had been afraid of occurred.

Let the Chaos begin

“Small differences in initial conditions yield widely diverging outcomes for chaotic systems, rendering long-term prediction impossible in general”

“In the Wake of Chaos: Unpredictable Order in Dynamical Systems”, Stephen H. Kellert, University of Chicago Press, 1993.

Models and tools used for measuring risk-taking eventually lead to higher levels of risk. Here is an example Taleb gave: suppose you are flying from Israel to Cyprus and the pilot says: “Well, I don’t have a map of Cyprus, but I have a map of Tokyo. I don’t have anything better, but that’s better than nothing.” What would you do? Probably get off the plane. Now, when I tell people who teach risk that they use the wrong methods, they tell me: “You talk big, Professor Taleb, but you never offered us a method of your own.” To me, it’s like stepping into the cockpit, telling the pilot that it’s crazy to try to fly to Cyprus with a map of Tokyo, and hearing him reply: “You talk big, but you did not offer me your own map.” That’s not a valid reason to use wrong maps, nor a valid reason to use wrong risk models.

As I wrote in my previous post “the metrics”, when it comes to estimating the human risk in information security we are sort of clueless. Since all IT systems have human elements, all current risk models that do not assume a worst-case scenario for those human elements are inaccurate by definition.

This is also true for “pure” technical systems, for which we can devise statistical models more easily. Take S.M.A.R.T., for example. Self-Monitoring, Analysis and Reporting Technology is used by hard disk manufacturers as a monitoring system to detect and report on various indicators of reliability, in the hope of anticipating failures. In 2007 Google, which had more than 100,000 hard disks, discovered that a large proportion of the drives that failed did so without giving any S.M.A.R.T. warnings at all, meaning that S.M.A.R.T. data alone was of limited usefulness in anticipating failures. MTBF (Mean Time Between Failures) does not help you when your hard disk crashes two days after you bought it, a day after you transferred all your family albums to it.

Highly complex systems, whether financial, electrical, IT oriented or human oriented, follow chaos theory models more than straightforward prediction models. As I wrote in “men without hats are living on the edge”, organizations rely on repeated processes and try to optimize them, so admitting that their system is chaotic goes against the main theme of the system. This is why economists, politicians, IT management and practically all “experts” fail so many times: they tend to use the wrong prediction methodology.

[youtube=http://www.youtube.com/watch?v=vsO7kzPXO8Y]

The Year of the Cut

She doesn't give you time for questions

As she locks up your arm in hers

And you follow till your sense of which direction

Completely disappears

[Al Stewart, “Year of the Cat”]

Today is the 1st of January 2012, and we are starting the year under very tough conditions. European leaders have warned of a difficult year ahead, and many economists predict a recession in 2012. In the US the situation is already bad: 1 in 2 people are poor or low-income, the suburbs are collapsing, and I don’t even dare to mention the country’s deficit. While China seems to be overtaking everyone else, the problem is that China is extremely fragile due to the speed at which it has evolved in recent years and the inflexibility it has shown in handling events like riots in rural areas.

Organizations have been doing everything to become more optimized, via initiatives like Lean Six Sigma, CMMI (Capability Maturity Model Integration), Continuous Process Improvement and more. The problem is that the way most organizations used those methodologies left them even more exposed to risk, because they used them to “cut waste”, an initiative that led to “optimization”. What organizations should have invested their energy in was trying to become antifragile, but as was mentioned before this is against the nature of most organizations.

What this means is that most organizations are arriving at these uncertain times in a very vulnerable state. They are fragile, and instead of working on becoming antifragile, their leadership, which has no skin in the game, is leading them to disaster.

If you want, as an organization, to survive the upcoming turmoil, you have one way to do so: you need to start embracing people who have skin in the game for the sake of others. Yes, those pesky Saints, Knights, Warriors, Soldiers, Prophets, Philosophers (in the pre-modern sense), Artists, Innovators, Mavericks, Great writers, Rebels, Dissidents and Revolutionaries; listen to your Taxpayers, and above all, hail the Hackers. The more your organization is made up of those people, the better your chance of survival. Embracing transparency and openness is the way forward.

For those of us who work in IT, antifragility has huge implications. First of all, it questions many of the paradigms risk departments are built upon. Second, it questions the cornerstones of most IT organizations. Take IT governance, for example: does it mean that IT governance is not important? I don’t think so; it’s just that it is overestimated, because organizations either ignore or are not aware of the risks it embeds in the system. It also means that bigger is not only more powerful but also more dangerous; it means that centralized IT procurement is far riskier than we admit; and above all, it should push IT people to stop trying to fight hackers and instead learn to use their power to make the organization grow. As Taleb says, candles fear the wind, but fire rejoices in it. Sustainable IT will be achieved when our IT organizations learn how to utilize the power of hackers, instead of tumbling because of them. At the end of the day, hackers might be the best chance of survival the organization has.

Addendum

I wanted to add one point, which I believe is important. Some organizations (businesses, governments, non-profit organizations and even many religions) tend to look at any person, political group or ideology that seems to contradict their core values as a possible source of black swans. The "solution" they reach for is to try to "end that problem" by many means: some of them polite, some ugly, and some pretty much human rights violations. What we can learn from Taleb is, first, that this does not last, certainly not forever, which is pretty much the holy grail of religions (the oldest organizations around). So if some religions didn't make it to the hall of fame, let me assure you: no one can promise you that the faith you hold right now will stay around forever. If you think I'm wrong I have one word for you: Pharaohs. And second, you're not going to make your fragile organization/society robust by taking down those who criticize it. If you do so, all you do is hide risks and increase the bigger risk that the individuals who control it will end up causing more damage than if you had allowed other individuals to object to their leaders. The only solution is to turn the organization/society into an antifragile one. But how to do it, well, that's a subject for a whole other long discussion on how we can make our societies and organizations antifragile... :)

© All Rights Reserved 2011.